Project Overview

When I started this project, I wanted to answer a simple but meaningful question: what actually makes a marriage satisfying? I did not want to stop at surface-level assumptions like age, number of children, or years married. I wanted to see whether emotional connection, cultural values, religion, or demographics had the strongest influence.

To do that, I worked with a global dataset of 7,178 married individuals from 45 countries. I cleaned the dataset, explored patterns visually, engineered new features, and trained several machine learning models to predict marital satisfaction.

What made this project especially interesting to me is that it combined something very human with something very technical. On one side, this project is about relationships, emotions, and satisfaction. On the other side, it is also about data cleaning, feature engineering, model comparison, and interpretation. I wanted to see whether the data would support what people often say in real life: that strong relationships are built more on emotional connection than on background factors.

The Dataset

The dataset included responses from married people across different countries and cultures. It gave a wide view of marriage by combining relationship, demographic, and cultural information in one place.

Demographics

Age, sex, education, number of children, years married, and other background variables.

MRQ

Questions measuring relationship quality, including love, pride, attraction, romance, and enjoyment.

KMSS

A 1–7 marital satisfaction scale used as the main target for the project.

GLOBE

Cultural orientation questions measuring family and social expectations.

In simple terms, this dataset gave me a chance to look at marriage from several angles at once. I was not only looking at who people are, but also at how they feel in their relationship and what kind of cultural values surround that relationship. That made it a strong dataset for asking broader questions about satisfaction.

How I Cleaned and Prepared the Data

Before doing any analysis, I had to make the data usable. The raw file included extra non-data rows, inconsistent column names, and missing values. So my preprocessing pipeline focused on making the data structured and model-ready.

- Removed non-data header rows

- Renamed all columns into clean labels

- Converted ordinal survey values into numeric form

- Used median imputation for missing numeric values

- Created a binary target variable for satisfaction

I also created two summary features: mrq_mean for overall relationship quality and globe_mean for overall cultural orientation.
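A minimal sketch of how a few of these steps could look in pandas. The column names (`mrq_love`, `globe_1`, `kmss_1`, and so on), the toy values, and the cutoff of 5.0 for the binary target are all assumptions made for illustration; they are not the actual columns or threshold used in this project.

```python
import numpy as np
import pandas as pd

# Hypothetical raw survey extract; names and values are illustrative only.
raw = pd.DataFrame({
    "age": [34, 51, np.nan, 29],
    "mrq_love": [5, 4, 2, np.nan],
    "mrq_pride": [5, 3, 2, 4],
    "globe_1": [6, np.nan, 3, 5],
    "globe_2": [7, 4, 3, 5],
    "kmss_1": [7, 6, 2, 5],
    "kmss_2": [7, 5, 3, 5],
    "kmss_3": [6, 6, 2, 4],
})


def prepare(df: pd.DataFrame, threshold: float = 5.0) -> pd.DataFrame:
    """Impute, build summary features, and derive a binary target.

    `threshold` is an assumed cutoff on the mean KMSS score.
    """
    out = df.copy()

    # Median imputation for every numeric column.
    numeric_cols = out.select_dtypes(include="number").columns
    out[numeric_cols] = out[numeric_cols].fillna(out[numeric_cols].median())

    # Row-wise means over each block of survey items.
    mrq_mean = out.filter(like="mrq_").mean(axis=1)
    globe_mean = out.filter(like="globe_").mean(axis=1)
    kmss_mean = out.filter(like="kmss_").mean(axis=1)
    out["mrq_mean"] = mrq_mean
    out["globe_mean"] = globe_mean
    out["kmss_mean"] = kmss_mean

    # Binary target: 1 = satisfied, 0 = not satisfied.
    out["satisfied"] = (out["kmss_mean"] >= threshold).astype(int)
    return out


prepared = prepare(raw)
print(prepared[["mrq_mean", "globe_mean", "kmss_mean", "satisfied"]])
```

Computing the three means before writing any of them back keeps a new column such as `mrq_mean` from being picked up by the `filter(like="mrq_")` selection.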

This step mattered a lot because machine learning models do not understand messy raw survey data the way people do. They need structured numbers. So part of the job was turning human responses into something the models could read while still preserving the meaning of the original survey.

Exploratory Data Analysis

Before building any models, I explored the data visually to understand the overall patterns. This helped me see whether certain variables stood out early and whether the dataset had any issues that could affect model performance.

One of the first things I noticed was that the dataset was imbalanced, since most people reported being satisfied. That matters because a model can sometimes look accurate just by predicting the majority class, so I had to pay attention to more than just accuracy.

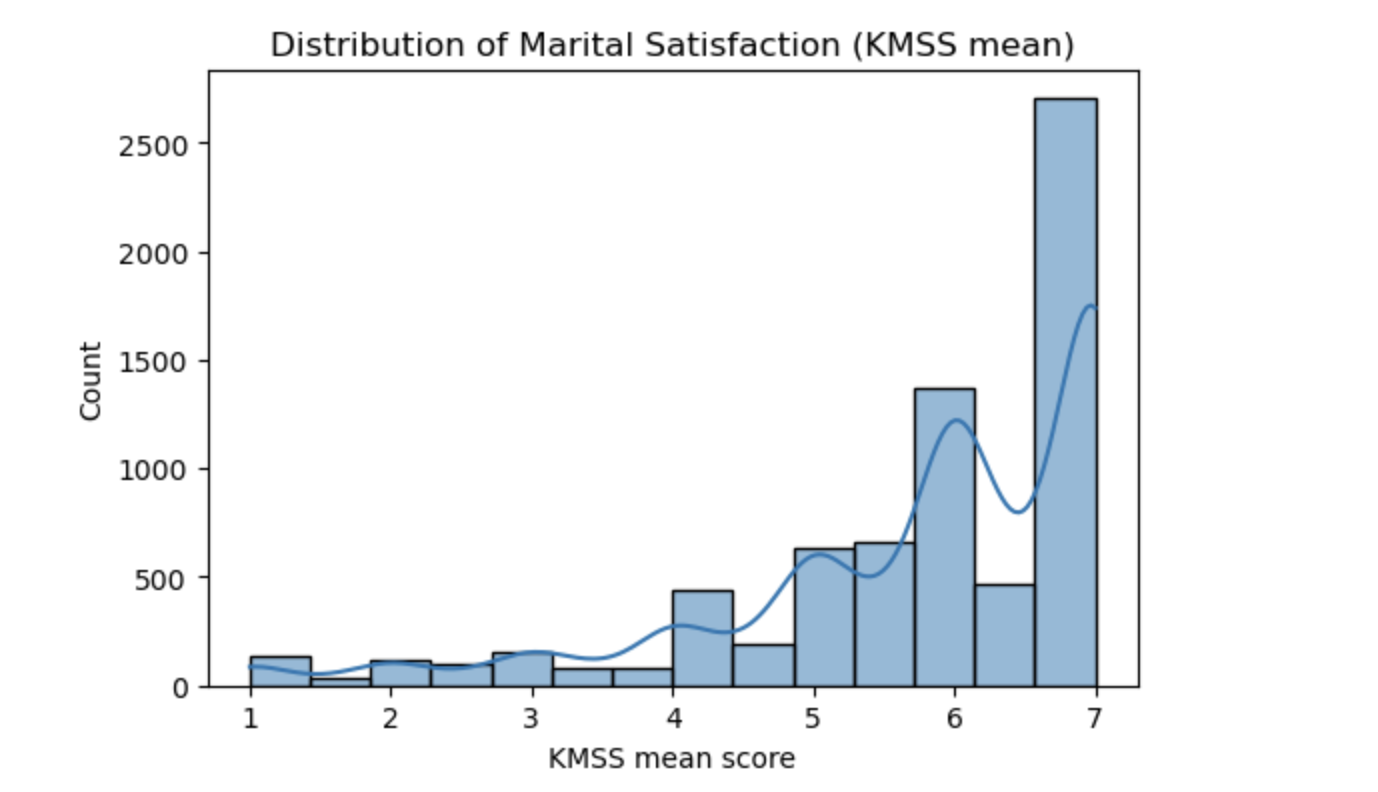

The histogram above shows the distribution of the marital satisfaction score, which was based on the KMSS mean. On the x-axis, the scores go from about 1 to 7, where lower numbers mean lower satisfaction and higher numbers mean higher satisfaction. On the y-axis, the graph shows how many people fall into each score range.

What stands out immediately is that the graph is much heavier on the right side, especially around 6 and 7. That means a large portion of the participants in this dataset reported being fairly satisfied or very satisfied in their marriage. There are still people with lower scores, but there are far fewer of them. This is why I describe the data as imbalanced: there are many more satisfied participants than dissatisfied ones.

This graph is important because it gives context for the rest of the project. It tells me that I am not working with a dataset where happy and unhappy marriages are evenly split. Instead, most people lean toward the satisfied side. That influences how I interpret model performance later, especially metrics like recall and F1-score.

Distribution of marital satisfaction scores based on the KMSS mean. Most scores cluster between 5 and 7, showing that the majority of participants reported being satisfied in their marriage.
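To make the imbalance point concrete, here is a small sketch in plain Python of what happens when a "model" always predicts the majority class. The 85/15 split is a made-up illustration, not the actual class balance of this dataset, and `classification_metrics` is a hypothetical helper written for this example.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for one chosen positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical labels: 85 satisfied (1) and 15 dissatisfied (0).
y_true = [1] * 85 + [0] * 15
# A baseline that ignores its input and always answers "satisfied".
always_satisfied = [1] * len(y_true)

for_satisfied = classification_metrics(y_true, always_satisfied, positive=1)
for_dissatisfied = classification_metrics(y_true, always_satisfied, positive=0)

print("satisfied as positive:   ", for_satisfied)
print("dissatisfied as positive:", for_dissatisfied)
```

Under these assumed numbers the baseline scores high on accuracy and on F1 for the satisfied class while never identifying a single dissatisfied participant, which is why metrics for the minority class and ROC-AUC are worth checking alongside accuracy.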

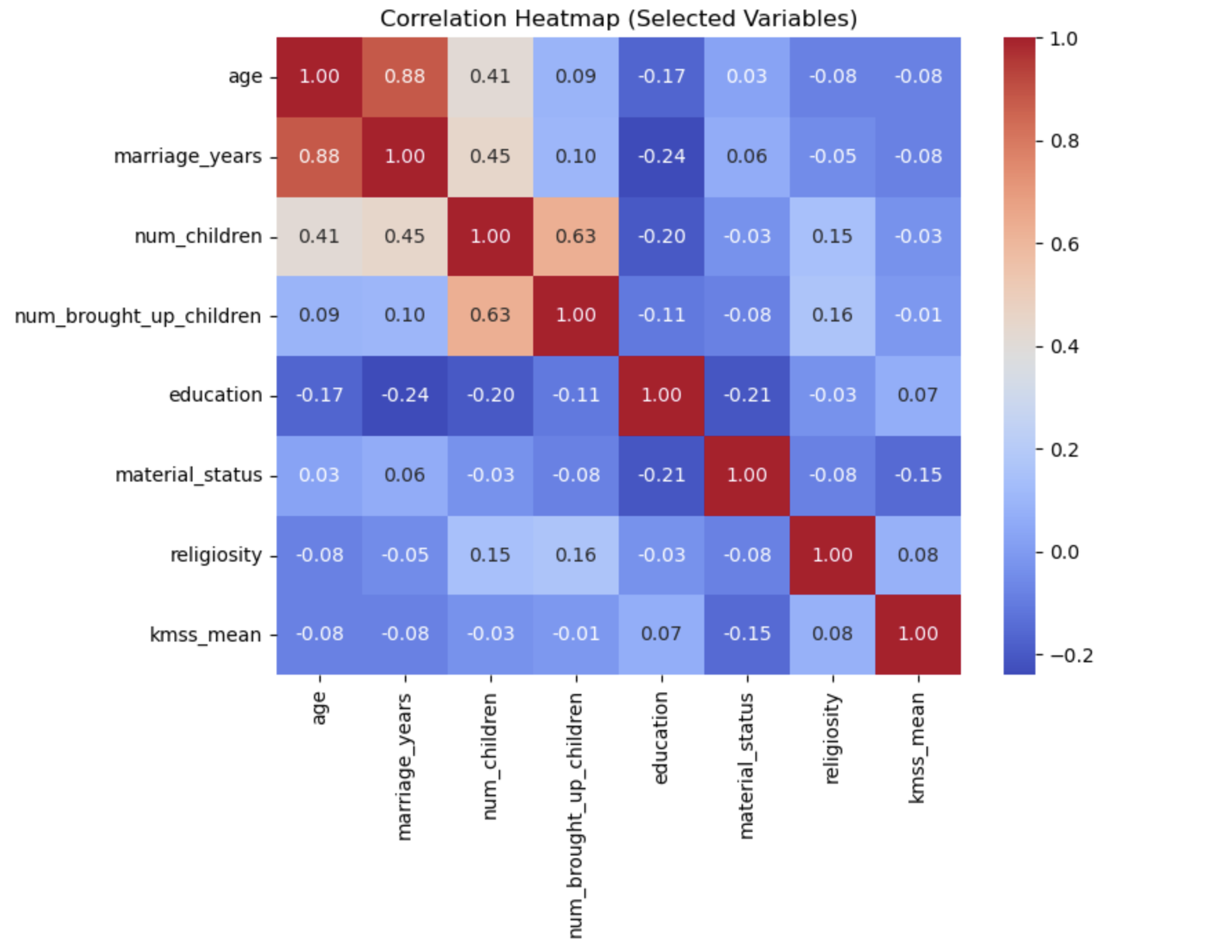

I also used a correlation heatmap to look at how the main numeric variables relate to one another. A correlation asks whether two variables tend to move together. Values close to 1 mean a strong positive relationship, values close to 0 mean little or no relationship, and negative values mean one tends to go up while the other goes down.

The heatmap showed that some variables are related in obvious ways. For example, age and marriage_years have a strong positive correlation of 0.88, which makes sense because older participants have usually been married longer. Likewise, num_children and num_brought_up_children are also strongly related.

But the most important part of the heatmap is what it says about kmss_mean, which is the marital satisfaction score.

Its relationships with most demographic variables are weak. Age, years married, number of children, and education all show very small correlations with satisfaction. That told me early on that demographic background alone does not explain marital satisfaction very well in this dataset.

In very simple terms, this heatmap showed me that just knowing someone’s age, how many kids they have, or how long they have been married does not tell me much about whether they are happy in their marriage. That pushed me to pay much closer attention to the relationship-quality variables.

Correlation heatmap of selected numeric variables. The key takeaway is that most demographic variables have weak relationships with marital satisfaction, which suggested that relationship-quality variables were likely more important.
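The numbers in a heatmap like this are Pearson correlation coefficients. The sketch below computes one by hand on synthetic data, assuming NumPy is available. The generated `age`, `marriage_years`, and `kmss_mean` arrays are invented stand-ins that only mimic the pattern described above (two demographic variables that move together, and a satisfaction score unrelated to age); they are not the project data.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 500

# Synthetic stand-ins, not the real survey columns.
age = rng.uniform(20, 70, n)
# Years married loosely tracks age, so these two should correlate strongly.
marriage_years = np.clip(age - 25 + rng.normal(0, 5, n), 0, None)
# Satisfaction is drawn independently of age by construction.
kmss_mean = np.clip(rng.normal(5.5, 1.0, n), 1, 7)


def pearson(x, y):
    """Pearson r: covariance of x and y scaled by both standard deviations."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc = x - x.mean()
    yc = y - y.mean()
    return float((xc * yc).sum() / np.sqrt((xc ** 2).sum() * (yc ** 2).sum()))


r_age_years = pearson(age, marriage_years)
r_age_kmss = pearson(age, kmss_mean)

print(f"age vs marriage_years: {r_age_years:.2f}")
print(f"age vs kmss_mean:      {r_age_kmss:.2f}")
```

With a pandas DataFrame, `df.corr()` returns the full matrix of these coefficients in one call, which is the usual input for a heatmap.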

Models I Used

To predict marital satisfaction, I trained multiple machine learning models so I could compare their performance instead of relying on just one approach.

- Random Forest for strong nonlinear pattern detection

- Support Vector Machine (SVM) for class separation

- K-Nearest Neighbors (KNN) for similarity-based prediction

- Decision Tree for interpretability

- Linear Regression for predicting the continuous satisfaction score

I evaluated the classification models using accuracy, precision, recall, F1-score, and ROC-AUC. For regression, I used MAE, RMSE, and R².

I wanted to compare different styles of models because each one learns patterns differently. Some models are better at handling nonlinear relationships, some are better for interpretability, and some work better when the data has certain structures. Comparing them helped me understand not only which model performed best, but also how stable the overall patterns were across methods.
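A comparison like this can be sketched with scikit-learn as follows. Everything here is an assumption for illustration: the data comes from `make_classification` rather than the survey, the hyperparameters are library defaults or arbitrary choices, and `positive_scores` is a hypothetical helper. It shows the general pattern of fitting several classifiers on the same split and scoring them with the same metrics, not the exact setup used in this project.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, f1_score, precision_score,
                             recall_score, roc_auc_score)
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC
from sklearn.tree import DecisionTreeClassifier

# Synthetic, imbalanced stand-in data (about 80% in class 1).
X, y = make_classification(n_samples=1000, n_features=10, n_informative=5,
                           weights=[0.2, 0.8], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0)

# SVM and KNN are distance-based, so they get a scaling step.
models = {
    "Random Forest": RandomForestClassifier(n_estimators=100, random_state=0),
    "SVM": make_pipeline(StandardScaler(), SVC(random_state=0)),
    "KNN": make_pipeline(StandardScaler(), KNeighborsClassifier(n_neighbors=5)),
    "Decision Tree": DecisionTreeClassifier(random_state=0),
}


def positive_scores(model, X):
    """Continuous scores for ROC-AUC: probabilities if offered, else margins."""
    if hasattr(model, "predict_proba"):
        return model.predict_proba(X)[:, 1]
    return model.decision_function(X)


results = {}
for name, model in models.items():
    model.fit(X_train, y_train)
    y_pred = model.predict(X_test)
    results[name] = {
        "accuracy": accuracy_score(y_test, y_pred),
        "precision": precision_score(y_test, y_pred),
        "recall": recall_score(y_test, y_pred),
        "f1": f1_score(y_test, y_pred),
        "roc_auc": roc_auc_score(y_test, positive_scores(model, X_test)),
    }

for name, metrics in results.items():
    print(name, {k: round(v, 3) for k, v in metrics.items()})
```

Stratifying the split keeps the class balance the same in the training and test sets, which matters when one class is much larger than the other.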

Results

The best-performing model was Random Forest. It achieved an accuracy of 88.5%, an F1-score of 0.932, and an AUC of 0.883. SVM performed almost as well on accuracy and F1-score, KNN was slightly weaker, and Decision Tree trailed the others, especially on AUC.

| Model | Accuracy | F1-score | AUC |
| --- | --- | --- | --- |
| Random Forest | 0.885 | 0.932 | 0.883 |
| SVM | 0.883 | 0.931 | 0.846 |
| KNN | 0.872 | 0.925 | 0.808 |
| Decision Tree | 0.824 | 0.892 | 0.714 |

These results told me that the dataset had meaningful enough patterns for machine learning to detect. In other words, the models were not just guessing randomly or defaulting to the majority class: every AUC was well above 0.5, so they were learning real structure from the data. Random Forest stood out, most likely because it handled the mix of emotional, demographic, and cultural variables especially well.

For the regression model, Linear Regression explained about 48.4% of the variance in marital satisfaction (R² ≈ 0.484), and its predictions landed within about one point of the real score on average. That is not perfect, but it is still meaningful, especially because marital satisfaction is a complex human outcome influenced by many factors that are not always captured in a survey.
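For readers unfamiliar with the regression metrics, here is a short sketch of how MAE, RMSE, and R² are computed from true and predicted scores. The five example scores are invented for illustration and `regression_metrics` is a hypothetical helper, so the printed values say nothing about this project's model.

```python
import math


def regression_metrics(y_true, y_pred):
    """Return MAE, RMSE, and R² for paired true and predicted values."""
    n = len(y_true)
    errors = [t - p for t, p in zip(y_true, y_pred)]

    # MAE: average size of the error, in the same units as the score.
    mae = sum(abs(e) for e in errors) / n
    # RMSE: like MAE, but large errors are penalized more heavily.
    rmse = math.sqrt(sum(e * e for e in errors) / n)

    # R²: share of the variance in y_true that the predictions account for.
    mean_true = sum(y_true) / n
    ss_res = sum(e * e for e in errors)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1 - ss_res / ss_tot
    return {"mae": mae, "rmse": rmse, "r2": r2}


# Invented satisfaction scores on the 1 to 7 scale.
y_true = [6.0, 7.0, 4.0, 5.0, 3.0]
y_pred = [5.5, 6.0, 4.5, 5.0, 4.0]

metrics = regression_metrics(y_true, y_pred)
print(metrics)
```

An R² of 0.484 in this formula means the model's squared error is a little over half of what you would get by always predicting the average score.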

What Actually Predicted Marital Satisfaction?

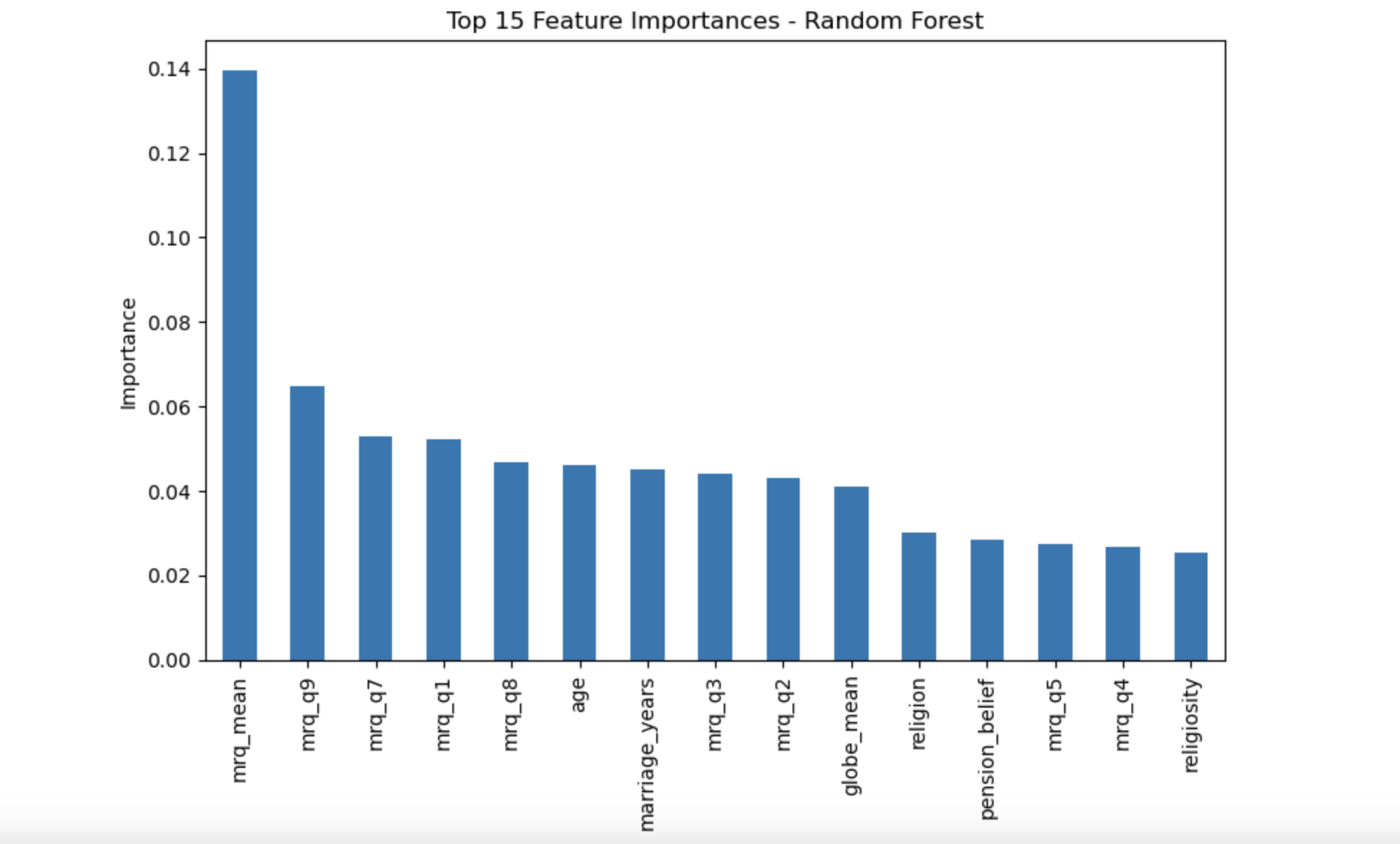

After identifying the best-performing model, I wanted to understand why it performed well and which variables were actually driving the predictions. To answer that, I looked at the Random Forest feature importance chart.

Feature importance tells us how useful each variable was when the model tried to predict marital satisfaction. The taller the bar, the more the model relied on that variable. So this graph is one of the clearest parts of the entire project, because it directly shows what mattered most.

The strongest predictor by far was relationship quality. The overall MRQ score, shown as mrq_mean, is the tallest bar on the chart. This means the model relied on that variable more than any other. Since mrq_mean summarizes emotional and relational quality across multiple questions, this tells me that the overall emotional health of the relationship is the most powerful predictor of marital satisfaction.

On top of that, several individual MRQ items also appear high on the chart, including variables related to love, pride, attraction, enjoyment, and romance. This is important because it shows that the result is not coming from just one single question. Instead, multiple emotional dimensions of the relationship are all contributing to satisfaction.

Age and years married appear in the middle of the chart, which means they had some influence, but they were still much less important than the relationship-quality variables. Cultural values, summarized by globe_mean, had modest influence. Religion and religiosity also appear, but much lower than the emotional variables.

This graph really helped tie the entire project together. The heatmap had already suggested that demographics were weak. This chart confirms it more directly by showing that emotional connection is what the model depends on most when it makes predictions.

Random Forest feature importance chart. Relationship-quality variables, especially mrq_mean, were the strongest predictors of marital satisfaction, far above demographic variables like age, religion, or years married.
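The values behind a chart like this can be read from a fitted scikit-learn Random Forest through its `feature_importances_` attribute. The sketch below uses synthetic data in which the label is built to depend only on a stand-in `mrq_mean` column, so the ranking it produces is true by construction and is not evidence about the real dataset. The feature values, noise level, and cutoff are all assumptions.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n = 1000

# Standardized synthetic stand-ins for three of the features.
mrq_mean = rng.normal(0, 1, n)
age = rng.normal(0, 1, n)
globe_mean = rng.normal(0, 1, n)

# By construction, the label depends on mrq_mean plus noise and nothing else.
satisfied = (mrq_mean + rng.normal(0, 0.5, n) > -0.8).astype(int)

feature_names = ["mrq_mean", "age", "globe_mean"]
X = np.column_stack([mrq_mean, age, globe_mean])

forest = RandomForestClassifier(n_estimators=200, random_state=0)
forest.fit(X, satisfied)

# Impurity-based importances; scikit-learn normalizes them to sum to 1.
importances = forest.feature_importances_
ranked = sorted(zip(feature_names, importances),
                key=lambda pair: pair[1], reverse=True)

for name, score in ranked:
    print(f"{name:<12} {score:.3f}")
```

One caveat when reading such a ranking: impurity-based importance is shared among correlated features, so a summary score and the individual items it is built from can split credit between them.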

Religion and Satisfaction

Even though religion was only a weak overall predictor in the machine learning model, I still wanted to break down average marital satisfaction by religion to see whether any meaningful group differences existed.

- Highest average satisfaction: Evangelic, Hinduism, Protestant

- Lowest average satisfaction: Jewish, Buddhist

This part of the analysis helped me make an important distinction. A variable can have low predictive importance overall and still show noticeable differences when you compare group averages. That is what happened here with religion.

In the Random Forest chart, religion was not one of the dominant features. That means it did not drive the model strongly compared to relationship-quality variables. But when I grouped the data by religion and looked at average KMSS scores, I still found visible differences between groups.

So I would describe religion as a variable with small overall influence but some meaningful group-level differences. It matters much less than emotional connection, but it is not completely flat either.
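A group comparison of this kind is a one-line `groupby` in pandas. In the sketch below the group labels `A`, `B`, and `C` and the scores are placeholders rather than real religions or survey values. Including the group size next to the mean is a suggestion of mine, since averages from very small groups are less reliable.

```python
import pandas as pd

# Placeholder groups and scores, for illustration only.
df = pd.DataFrame({
    "religion": ["A", "A", "B", "B", "B", "C"],
    "kmss_mean": [6.0, 7.0, 4.0, 5.0, 6.0, 3.0],
})

# Average satisfaction and number of respondents per group, highest first.
by_religion = (
    df.groupby("religion")["kmss_mean"]
      .agg(["mean", "count"])
      .sort_values("mean", ascending=False)
)

print(by_religion)
```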

Final Insight

The clearest lesson from this project is that emotional connection was the strongest predictor of marital satisfaction in this dataset. Love, pride, romance, attraction, and enjoyment consistently mattered more than age, education, number of children, or religion.

In other words, it is not mainly about who the couple is on paper. It is more about how they experience the relationship itself.

That was the most meaningful part of this project for me. The data supported something that feels intuitive in real life: marriages are shaped much more by the emotional quality of the relationship than by surface-level background characteristics. The machine learning models made that idea measurable and visible.